Projects

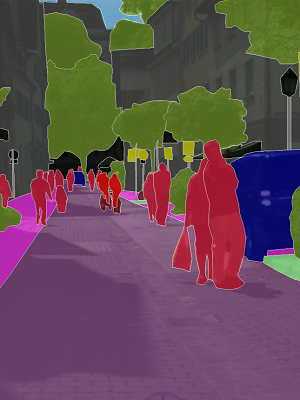

Semantic Segmentation with Swin Transformers

• Implemented a state-of-the-art segmentation model using U-Net, Transformers, and transfer learning, fine-tuning on 5k+ Cityscapes images

• Significantly improved mIoU, reaching 63%, using the Swin attention residual mechanism with multilayer perceptrons

Electricity Price Forecasting

• Forecasted daily and yearly prices using time-series analysis of scraped generation, consumption, and weather data

• Engineered candidate features using sliding windows and applied autoregressive differencing to reduce errors

• Achieved a low MAPE of 9.69% with an LSTM model, outperforming the SOTA Kaggle model with a 32% lower RMSE

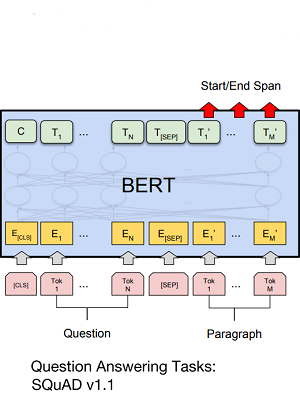

Question Answering Model Using BERT and Derivatives

• Created a scalable QnA model by leveraging preprocessed Word2Vec and SIF embeddings on SQuAD v1.1 with 100K+ question-answer pairs

• Achieved 81% EM and an 84.5% F1 score with a DistilBERT-BERT ensemble transformer model

Claim Prediction in Travel Insurance

• Developed an ensemble of boosted models to classify imbalanced claims data, using feature selection and SMOTE oversampling

• Deployed the trained model as a REST API using Flask, with a prediction accuracy of 94.69% and an F1 score of 0.84